What AI Can (& Cannot) Do Today & Why You Should Pay Attention

Originally published August 2023 · Last updated April 2026

Your guide to understanding what AI does amazingly well in 2026 — and where it still falls short. No technical wizardry required.

First, let me make something clear: I am not an AI researcher. I wouldn’t survive a week as a software engineer. This isn’t an attempt to walk you through the tech. But you don’t need to be a civil engineer to understand what roads do to an economy.

Second, I have skin in the game. I’m building Magic Studio with a small but incredibly cool team, and millions of people use our products. The soul of what we build is the AI that gives everybody creative superpowers. So I’m confident I have an insider’s view on what this whole AI business is about.

Let’s dive in.

So What’s All the Fuss About?

Why are so many people still talking about AI? Is this like the dot-com bubble? Is this like Web3? Is this a solution looking for a problem that doesn’t even exist?

AI research has been going on for decades. Nuance built a speech recognition system based on machine-learned probabilities back in the 90s. Some of you might remember the Netflix Prize, which championed the idea that improving algorithms at scale could deliver real value.

So no, not new.

But has something changed? Yes — dramatically.

Something emerged from all those decades of effort to build systems that mimic intelligence. This “emergence” (systems trained on a narrow task developing abilities that go far beyond that training) kicked off a revolution starting around 2022. An AI model trained on text could suddenly answer math questions, write code, and reason through complex problems it was never explicitly taught to solve.

That had a lot of us excited. And some scared.

Back when I first wrote about this in 2023, many compared the AI buzz to Web3 hype. But I argued that AI actually looked more like the microprocessor — something that improves rapidly and underpins massive progress in nearly everything. That comparison has held up. By 2026, AI has gone from impressive party trick to essential infrastructure. Morgan Stanley estimates nearly $3 trillion in AI-related infrastructure investment will flow through the global economy by 2028. Companies aren’t asking “should we use AI?” anymore. They’re asking “how fast can we scale it?”

The improvements haven’t slowed down. If anything, they’ve accelerated. AI systems can now autonomously execute complex projects, reason through multi-step problems, and operate across text, images, video, voice, and sensor data simultaneously. The models today are multimodal — they don’t just read; they see, hear, analyze, and respond.

So great, there’s all this power. What can we do with it?

I’m going to break this down into three categories, mostly centered around the information AI processes and delivers:

- Text

- Visual Content

- Everything Else

The Word Machine

AI has been dealing with text for a while. We had our share of laughs with autocomplete. But the large language models of recent years changed everything. Trained on massive datasets at the scale of the internet itself, these models generate text that retains coherence across long passages — text that is often indistinguishable from what a human would produce.

Great! So we can throw out our keyboards and let machines do all the typing?

Well, not quite. But we’re a lot closer than we were.

The hallucination problem — where AI confidently outputs false information — is still very much alive. The best models in 2026 have gotten their hallucination rates down to around 3% on certain benchmarks. That’s a massive improvement from the 15–20% we were seeing two years ago. But here’s the catch: on harder questions — legal queries, medical facts, person-specific knowledge — even frontier models still hallucinate at rates of 10–18%. And a strange paradox has emerged: the more powerful “reasoning” models that think step-by-step sometimes hallucinate more on factual questions than simpler models.

The reason this happens is critical to understanding what AI is doing.

AI generates a coherent, relevant text construction that matches the request and looks right based on the vast amount of text it has seen. But truth is not an explicit ingredient. The model doesn’t have a barometer for truth — it has a barometer for plausibility. OpenAI published research in 2025 that crystallized this: hallucinations persist because training and evaluation procedures reward guessing over acknowledging uncertainty. The models are literally incentivized to bluff rather than say “I don’t know.”
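To make “plausibility, not truth” concrete, here is a deliberately tiny Python sketch. It is a toy word-pair counter, nowhere near a real language model, and the corpus is invented for illustration: a popular myth simply appears more often than its correction. The model completes the sentence with whatever is most frequent, because nothing in it checks facts.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: a popular myth appears more often than its correction.
corpus = [
    "the great wall is visible from space",
    "the great wall is visible from space",
    "the great wall is not visible from space",
]

def train_bigrams(sentences):
    """Count which word follows which (a tiny stand-in for a language model)."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def complete(follows, prompt, max_words=5):
    """Greedily append the most frequent next word; nothing here checks truth."""
    words = prompt.split()
    for _ in range(max_words):
        options = follows.get(words[-1])
        if not options:
            break  # no known continuation for this word
        words.append(options.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(corpus)
print(complete(model, "the great wall is"))
```

Real models learn far richer patterns than word pairs, but the underlying pull is similar: the most statistically likely continuation wins unless something else (retrieval, verification, or training that rewards saying “I don’t know”) pushes back.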

But the story has gotten much more interesting since I last wrote about this. We’ve moved from chatbots to what the industry calls agentic AI — systems that don’t just answer questions but plan, decide, and act. These agents can perform competitive research, generate marketing campaigns, manage customer support workflows, run financial forecasts, and automate operations across entire departments. This isn’t just text generation anymore. It’s delegation.

So what can you do with all this powerful text AI today?

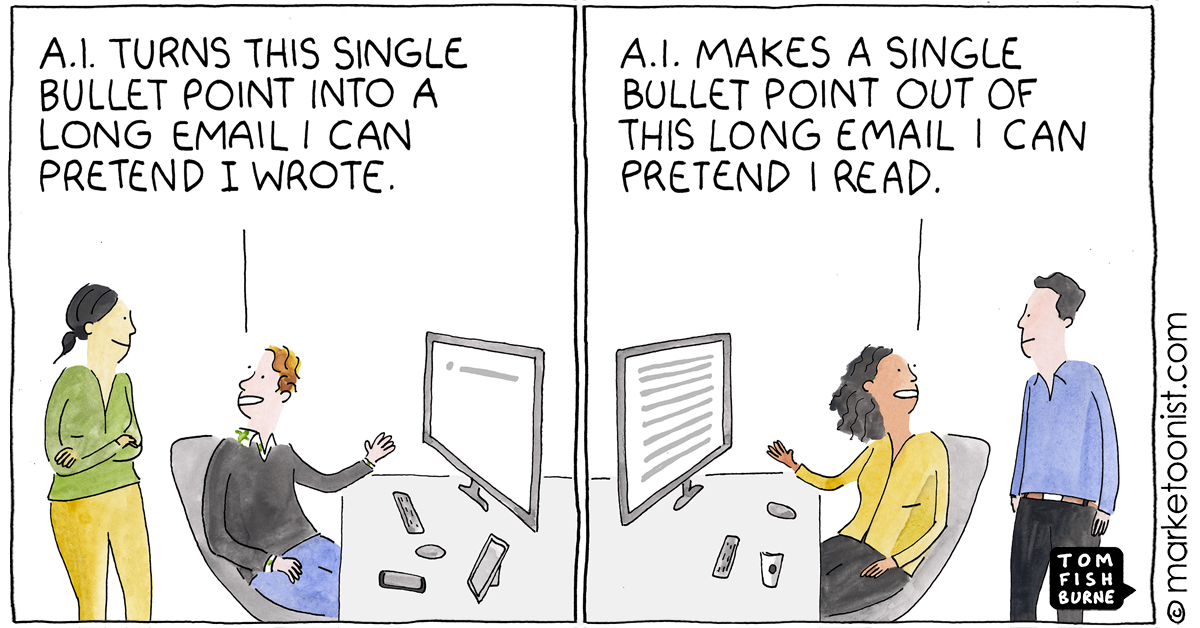

For those who need to create text, whether it’s marketing copy, emails, reports, or code, AI is no longer just a sidekick. It’s a co-pilot. Working without it has become a measurable competitive disadvantage: AI-assisted writers and coders are dramatically more productive.

For those processing information — building chatbots, knowledge bases, research tools — the picture is more nuanced but vastly improved. Retrieval-augmented generation (RAG), where models pull from verified sources before answering, has become standard practice. The best systems now cite their sources and let you verify. The wild-west days of AI just making things up are being tamed, though not eliminated.
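Here is a rough Python sketch of the retrieval half of RAG. The documents, IDs, and function names are hypothetical, and simple word overlap stands in for the vector search real systems typically use. The idea is to find the relevant source first, then hand it to the model with instructions to answer from it and cite it; the actual call to a model is left out.

```python
import re

# Hypothetical mini knowledge base; the IDs and text are made up for illustration.
documents = {
    "doc-1": "Our support line is open Monday to Friday, 9am to 5pm.",
    "doc-2": "Refunds are processed within 14 days of receiving the return.",
    "doc-3": "Premium plans include priority email support.",
}

def tokenize(text):
    """Lowercase the text and return its set of words, dropping punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, docs, k=1):
    """Rank documents by word overlap with the question (a crude stand-in for vector search)."""
    q = tokenize(question)
    ranked = sorted(docs.items(), key=lambda item: len(q & tokenize(item[1])), reverse=True)
    return ranked[:k]

def build_prompt(question, docs):
    """Put the retrieved sources into the prompt and ask the model to cite them."""
    sources = retrieve(question, docs)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in sources)
    return (
        "Answer using only the sources below and cite the source ID. "
        "If the sources do not contain the answer, say you don't know.\n"
        f"{context}\nQuestion: {question}"
    )

print(build_prompt("How long do refunds take?", documents))
```

Because the prompt carries the source ID, the answer can point back to `[doc-2]`, which is what lets you verify it.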

All in all, you should be using AI as part of your creative writing, coding, research, and information delivery workflows.

Start today. Things are great, and they’re getting better fast.

What You See Is What You Get

This is closer to home for me, with all the things we’re building at Magic Studio.

The idea that visual information can be processed coherently by AI is relatively recent compared to text. But it has leapfrogged other areas in capturing the popular imagination. Perhaps because our visual experiences are such a big part of who we are and how we interact with the world.

What AI can do with visual information falls into roughly three categories:

- Classify — understand and categorize images against other information

- Generate — create visual content from text or image inputs

- Map — create layers of information over images (depth, segmentation, color, patterns)

In 2026, all three of these have matured dramatically.

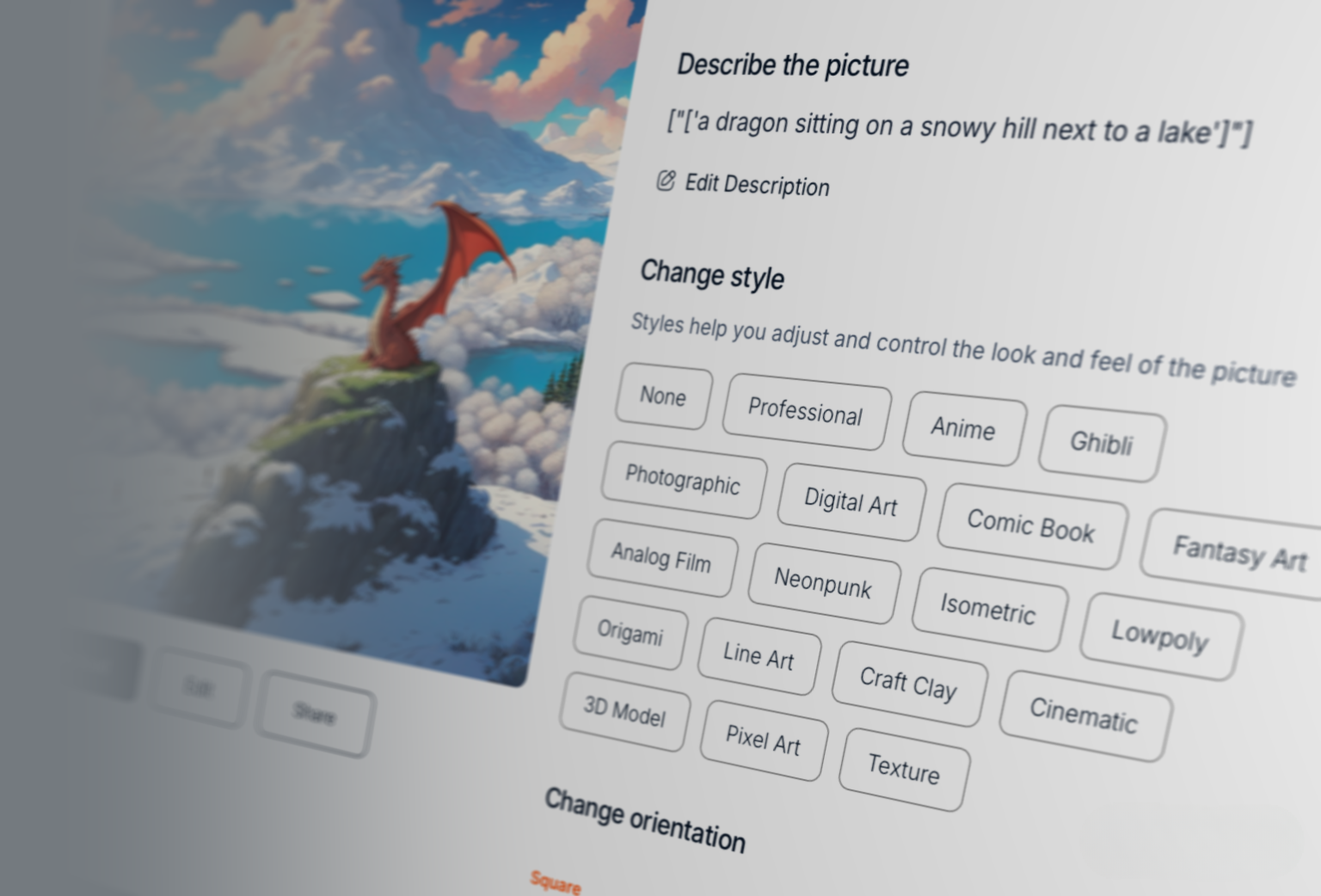

Like text models, visual models can depart from expected truth and misinterpret input. But outcomes in the visual space are not so binary, and this flexibility means the truly useful possibilities are much wider. For example, these are all perfectly acceptable creations that fit the input “a dragon sitting on a snowy hill next to a lake”:

[Images: dragon sitting on a snowy hill next to a lake, in the style of Studio Ghibli]

But how many ways can you say the same thing? Would any of them feel different? That is the expansive promise of visual AI.

AI processes visual information in a few different ways. One is the process of taking sets of pixels and correlating them with text that is “nearby”: captions, alt-text, titles on the page. This creates a virtual “space” in which similar images sit near each other.
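A minimal Python sketch of that “space,” assuming made-up three-number vectors in place of the hundreds or thousands of dimensions a real model learns from image and caption pairs. Cosine similarity, one common way to measure closeness in such spaces, scores the two dog photos as near each other and the skyline as far away.

```python
import math

# Hypothetical 3-number "embeddings"; the values are invented for illustration.
embeddings = {
    "photo of a golden retriever": [0.9, 0.8, 0.1],
    "photo of a labrador puppy": [0.7, 0.9, 0.3],
    "photo of a city skyline": [0.1, 0.2, 0.95],
}

def cosine_similarity(a, b):
    """1.0 means pointing the same way in the space; values near 0 mean unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

query = embeddings["photo of a golden retriever"]
for label, vector in embeddings.items():
    print(f"{cosine_similarity(query, vector):.2f}  {label}")
```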

A second way is to nudge the information in each pixel, step by step, toward the idea that needs to be represented. This is loosely the process of diffusion: start with random noise and end up with something coherent. While it’s hard to visualize a process that goes from random noise to something meaningful, think of it as the opposite of smudging a picture.
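The loop below is a caricature of that process in Python, not a real diffusion sampler. The 8-pixel target image and the `denoise_step` helper are hypothetical. A real model has to predict, at each step, what noise to remove; here the step cheats by peeking at the target, so the sketch only shows the shape of the process: start from noise and make many small moves toward a coherent result, with less randomness as you go.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical 8-pixel grayscale "image" to produce (0 = black, 1 = white).
target = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0, 0.8, 0.6]

def denoise_step(pixels, step, total_steps):
    """Move every pixel 30% of the way toward the target, plus a shrinking bit of fresh noise.

    A trained diffusion model would *predict* the direction to move; using the
    known target here is a shortcut for illustration only.
    """
    noise_scale = 0.1 * (1 - step / total_steps)  # less randomness near the end
    return [
        p + 0.3 * (t - p) + random.gauss(0, noise_scale)
        for p, t in zip(pixels, target)
    ]

total_steps = 30
pixels = [random.random() for _ in target]  # start from pure noise
for step in range(total_steps):
    pixels = denoise_step(pixels, step, total_steps)

print([round(p, 2) for p in pixels])
```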

A third way is to map other dimensions or parameters over the pixels — color over a black and white image, depth information over a 2D image, patterns across a scene. In reality, a lot of these approaches intermingle in any given model to give us the powerful things we see in action today.

Image generation is no longer a novelty. It’s a core production tool used by marketers, designers, filmmakers, and content creators worldwide. The 2026 models produce 4K output as standard, render legible text inside images (a notorious weak spot until recently), and maintain character consistency across multiple scenes. That last one is huge — you can now create a character and have it appear recognizably across an entire campaign without manual editing.

The creative possibilities have exploded. Multiple models from companies like OpenAI, Google, Adobe, Midjourney, and others compete fiercely on quality, and creators increasingly mix and match tools rather than committing to a single platform. Adobe Firefly now brings together over 30 AI models in one creative environment, letting you generate with one model, refine with another, and edit with professional tools — all in the same workflow.

Today you can reliably use AI to edit images with precision that would have required expert Photoshop skills just two years ago. Erasing objects, swapping backgrounds, extending scenes, upscaling resolution — these are production-ready workflows now, not experiments. The most prudent products give users simple, intuitive inputs to guide the AI, making creative expression accessible without a barrier of skill or knowledge.

When text is the input for generating visual results, it’s important to understand that our visual cognitive space is much more nuanced and varied than language. If you say “apple” and I say “apple,” we both understand roughly the same thing, but the pictures in our heads may be very different. In visual creative expression, the creator’s intent for what an image must look like matters a great deal, and leaving room to iterate toward that intent is critical. AI model inputs are typically technical, so it’s important to distill them into usable, relevant, and friendly inputs for users.

And then there’s video.

When I wrote about this in 2023, I said video AI would progress “correspondingly slowly” compared to images. I was right — but the pace of the broader field was so fast that “slowly” still meant revolutionary progress. AI video generation in 2026 is genuinely production-ready for social media, digital marketing, and content creation at scale. Tools like Runway, Kling, and Google’s Veo produce clips that are often indistinguishable from professionally shot footage on mobile screens. Character consistency across shots is largely solved. The “uncanny valley” of stiff AI movement has receded dramatically.

The Sora story is instructive. OpenAI launched its text-to-video model to extraordinary hype in late 2024 — and then shut it down in March 2026, hemorrhaging $15 million per day in compute costs against minimal revenue. Sora’s death wasn’t the end of AI video. It was the end of the hype-funded era and the beginning of a more sustainable market. The tools that survived are the ones building real businesses around genuinely useful capabilities.

That said, AI video is not yet replacing cinematographers and film crews for narrative filmmaking. The consistency, character control, and directorial precision required for long-form content still demand human expertise. But for everything else? The tools are already good enough.

Audio has made enormous strides too. AI can now generate synchronized sound effects, dialogue, and ambient audio alongside video in a single pass. Voice synthesis has become remarkably natural. The convergence of image, video, and audio into unified multimodal systems is one of the defining technical shifts of 2026.

Absolutely amazing products for creative expression are here today. The upside of putting creative visual tools into everyone’s hands — liberating creative work from barriers of skill and knowledge — is already showing up in ways we couldn’t have imagined even two years ago.

Everybody should have creative AI visual tools in their workflow today. A lot of the basic tools are free, so you can have fun and switch to more powerful versions for serious work.

And Then There’s Everything Else

The “everything else” might seem dismissive to those working on all the other applications of AI. But hear me out.

This is the class of AI that is perhaps the most pervasive and valuable. In one crucial way these systems all look similar: they deal with input from physical sources — microphones, cameras, LIDAR sensors, industrial sensors, personal health monitors, environmental instruments. Hardware or physical input, often not originating from a human thought.

When your car drives itself, AI. When your smart speaker listens, AI. When a camera at a traffic light catches an offender, AI. When your phone’s camera detects a scene and adjusts processing, AI. When your fitness tracker detects an irregular heartbeat, AI.

What’s changed dramatically since 2023 is scale and integration. AI in this category has moved from isolated applications to embedded infrastructure. Cities are using AI for real-time optimization of transit systems. Hospitals are deploying diagnostic AI that works alongside doctors. Manufacturing lines use AI-driven quality control that catches defects the human eye would miss.

The other seismic shift is agentic AI — and this bridges across all three categories. These aren’t just systems that respond to commands. They anticipate needs and execute complex objectives with minimal human oversight. Think of it as the difference between asking a search engine a question and having a capable assistant who researches the topic, synthesizes findings, drafts a report, and puts it on your desk.

Agentic AI adoption is surging. Nearly half of companies are either deploying or assessing AI agents. Telecommunications, retail, healthcare, and financial services are leading the charge. The conversation has shifted from “what can AI do?” to “what should we let AI do on its own?” — which is why governance, trust, and oversight have become the central challenges of this era.

While this may be the most pervasive way AI makes the world better, it’s still not talked about a lot for three big reasons:

- It’s not sexy

- It doesn’t make for great stories

- Each individual problem doesn’t seem that large

But collectively? This is where the quiet revolution is happening.

What Next?

I often think we tell ourselves that a fascinating piece of technology is futuristic because the distance between today and the future makes it all believable and acceptable.

But with AI, it’s already here. It’s been here. And it’s getting better at a pace that outstrips nearly everything in our technological history.

The conversation has matured since I first wrote about this. In 2023, the question was “is this real?” In 2026, the question is “how do we deploy it responsibly at scale?” Companies are grappling with governance frameworks, data readiness, talent gaps, and the hard work of integrating AI into core operations rather than running it as a side experiment.

The hallucination problem hasn’t been solved — but the best systems now hallucinate at rates lower than some human benchmarks for factual accuracy, at least on certain tasks. Visual AI has gone from generating amusing curiosities to producing broadcast-quality content. Agentic systems are moving from pilot programs to full-fledged deployments. And the regulatory landscape — from the EU AI Act to a patchwork of U.S. state laws — is finally catching up with a technology that has been running ahead of the rules.

So make the most of it.

Everything about AI is changing rapidly, so don’t quote me without the context of time and proportion. But one thing I’m confident about: AI is not a bubble waiting to burst. It’s an ingredient in whatever humanity cooks up next — and the recipe is only getting more interesting.